Understanding Quantum Cryptography: The Key to Digital Trust

Quantum computing is moving into the real world. What used to stay in labs now affects policy, creates startups, and threatens digital trust. In the near future, powerful quantum machines could break common public-key cryptography, posing a big cybersecurity risk.

Today’s security (RSA and ECC) relies on math problems that classical computers can’t solve quickly. Cryptographically relevant quantum computers (CRQCs) would undo that, letting attackers decrypt protected data.

“Y2Q” (years to quantum) echoes Y2K but is different: Y2K had a clear deadline and known effects; Y2Q’s timing and impact are unknown. Q-day could arrive suddenly, so data stored now might be readable later.
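As a rough illustration of why RSA depends on factoring being hard, here is a toy sketch in Python. The primes, the exponent, and all function names are made up for this example, and trial division merely stands in for any method that factors the modulus quickly (on a quantum computer that role would fall to Shor's algorithm, which is not implemented here). Real keys use primes hundreds of digits long, so this kind of search is hopeless on classical hardware.

```python
from math import gcd

def toy_rsa_keypair(p: int, q: int, e: int = 17):
    """Build a toy RSA key from two small primes (illustrative sizes only)."""
    n = p * q
    phi = (p - 1) * (q - 1)
    assert gcd(e, phi) == 1, "e must be coprime to phi"
    d = pow(e, -1, phi)  # modular inverse, Python 3.8+
    return (n, e), d

def factor_by_trial_division(n: int):
    """Stand-in for a fast factoring method; only feasible for tiny n."""
    f = 2
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 1
    raise ValueError("n has no nontrivial factors")

def recover_private_exponent(n: int, e: int) -> int:
    """Once n is factored, the private exponent follows directly."""
    p, q = factor_by_trial_division(n)
    return pow(e, -1, (p - 1) * (q - 1))

# Hypothetical toy parameters, far too small for real use.
public, d = toy_rsa_keypair(61, 53)
n, e = public
ciphertext = pow(42, e, n)                 # encrypt the message 42
cracked_d = recover_private_exponent(n, e) # attacker only sees (n, e)
recovered = pow(ciphertext, cracked_d, n)  # attacker decrypts
```

The point of the sketch is that the private key is never stolen: whoever can factor `n` can rebuild it from public information alone.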

Sorry, But AI Deserves ‘Please’ Too!

Large language models (LLMs) and AI chatbots are now integral to work and personal life, changing how we access information, get advice, and conduct research. They deliver rapid, efficient insights and solutions, prompting reassessment of AI’s role in daily routines. As reliance on AI for understanding and generating natural language grows, important questions arise about impacts on human autonomy, creativity, and connection—challenging us to balance the benefits of speed and assistance with preserving human judgement, originality, and social bonds.

Exploring Quantum User Experience: Future of Human-Computer Interaction

In the digital era, evolving technology demands more fluid and personalized user experiences, challenging traditional Human-Computer Interaction (HCI) models. The Quantum User Experience (QUX) framework inspires a shift towards adaptable, probabilistic user engagement, drawing parallels with quantum mechanics. This approach fosters real-time responsiveness, meeting users' dynamic needs in an interconnected landscape.

Rethinking Reality: The Unwritten Story of Time

The narrative of the universe is dynamic and evolving, challenging traditional views of time as predetermined. Einstein's relativity and quantum mechanics present opposing perspectives, with determinism clashing against indeterminism. Nicolas Gisin’s intuitionist approach suggests that time creates new information, emphasizing an open future and a participatory reality shaped by experiential unfolding.

Exploring Quantum Computing and Wormholes: A New Frontier

Recent developments in quantum gravity and teleportation suggest that wormholes may exist, derived from quantum entanglement principles. Theoretical work by Maldacena, Susskind, and others links these ideas, challenging our understanding of space-time. Notable experiments, including those utilizing the SYK model, demonstrate tangible outcomes related to quantum teleportation, blurring the line between science fiction and reality.

Beyond Barriers: How Quantum Tunneling Powers Our Digital and Cosmic World

Quantum tunneling is a fundamental phenomenon influencing both cosmic and digital realms. It facilitates stellar fusion, enabling energy output from stars, while also driving advancements in electronics by allowing electrons to navigate barriers in devices like SSDs and tunnel diodes. This duality showcases quantum mechanics as a vital force in modern technology.

Cultivating Optimism: A Skill for Success

Optimism is a transformative mindset that encourages individuals to view challenges as opportunities for growth. It fosters creativity, resilience, and collaboration, allowing people to navigate uncertainty with confidence. By replacing fear with optimism, we enhance our potential for success and build supportive environments that promote personal and professional development.

Quantum Revolution: How Max Planck Tapped Into the Universe’s Zero-Point Mysteries

Max Planck's exploration of light bulb efficiency led to the groundbreaking discovery of energy quantization, forming the foundation of quantum theory. His work resolved the black body problem, revealed zero-point energy, and showcased a universe in constant motion. This insight reshapes our understanding of reality, emphasizing perpetual change and the interconnectedness of all existence.

Transformative Discovery: Integrating Coaching Principles for Project Success

The Human-Centered Approach to Discovery emphasizes coaching in gathering insights rather than relying on formal interviews. By fostering genuine conversations through active listening and curiosity, deeper underlying issues are revealed, leading to better design decisions. This collaborative method enhances alignment, engagement, and ownership, ultimately transforming workplace dynamics and project outcomes.

Understanding Agentic AI: Key Insights for Retail Leaders

Agentic AI refers to sophisticated autonomous systems in retail that can understand goals, reason, and act independently. Current implementations like Adaptive Workflow Orchestration (AWO) often confuse automation with autonomy, leading to misaligned expectations. Retailers must differentiate between stages of automation to ensure proper governance and readiness for future agentic ecosystems.

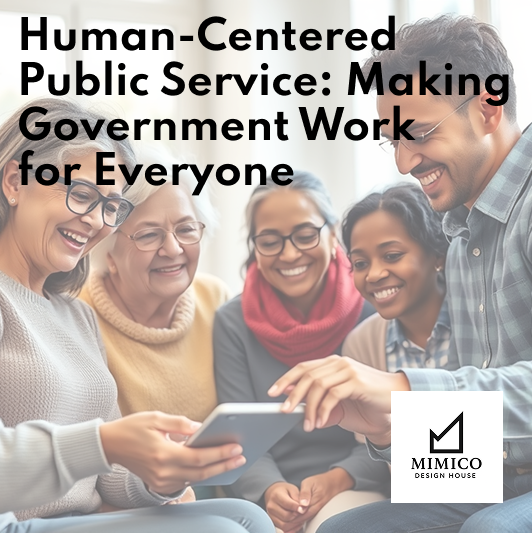

Human-Centered Public Service: Making Government Work for Everyone

Government digital services significantly impact daily life but often frustrate users due to outdated designs and accessibility issues. Applying human-centered design can yield user-friendly resources, improve service delivery, and ensure accessibility from the outset. Effective public design is often invisible, enhancing user experience by eliminating obstacles without drawing attention to the changes made.

Exploring the Implications of Quantum Collapse on Computing

The measurement problem in quantum mechanics significantly influences the advancement of quantum computing. It involves superposition and wave function collapse, where measurements reduce multiple possible states to a single outcome. Addressing the complexities of measurement is crucial for reducing errors in quantum algorithms, fundamentally impacting their success and efficiency in practical applications.
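A minimal classical simulation can make the idea of collapse more concrete. The sketch below assumes a single qubit described by two amplitudes and applies the Born rule: each measurement returns one definite outcome with probability equal to the squared magnitude of its amplitude. The function name and parameters are hypothetical, and this is ordinary random sampling, not a quantum program.

```python
import random
from math import isclose

def measure(amp0: complex, amp1: complex, rng: random.Random) -> int:
    """Sample one measurement outcome (0 or 1) using the Born rule."""
    p0 = abs(amp0) ** 2
    p1 = abs(amp1) ** 2
    assert isclose(p0 + p1, 1.0, rel_tol=1e-9), "state must be normalized"
    return 0 if rng.random() < p0 else 1

rng = random.Random(0)   # fixed seed so runs are repeatable
amp = 2 ** -0.5          # equal superposition: amplitude 1/sqrt(2) on each state

counts = [0, 0]
for _ in range(10_000):
    counts[measure(amp, amp, rng)] += 1
```

Each call yields a single 0 or 1, never "both"; the superposition only shows up in the statistics, where `counts` ends up split roughly evenly between the two outcomes.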

Quantum Entanglement: 'Spooky Action at a Distance'

In 1935, Albert Einstein, Boris Podolsky, and Nathan Rosen published a paper addressing the conceptual challenges posed by quantum entanglement [1]. These physicists argued that quantum entanglement appeared to conflict with established physical laws and suggested that existing explanations were incomplete without the inclusion of undiscovered properties, referred to as hidden variables. This argument, later termed the EPR argument, underscored perceived gaps in quantum mechanics.

Transforming Data into Actionable Insights through Design

At the age of fifteen, I secured a summer position at a furniture factory. To get the job, I expressed my interest in technology and programming to the owner, specifically regarding their newly acquired CNC machine. To demonstrate my capability, I presented my academic record and was hired to support a senior operator with the machine.

That summer, I was struck by the ability to control complex machinery through programmed commands on its control board. The design and layout of the interface, as well as the tangible results yielded from my input, highlighted the intersection of technical expertise and thoughtful design. This experience sparked my curiosity about the origins and development of such systems and functionalities.

The Quantum Realm: Our Connection to the Universe

When we close our eyes and place our hand on our forehead, we perceive the firmness of our hand and the gentle warmth of our skin. This physical sensation, the apparent solidity and presence of our body, seems tangible and reassuring. However, at the most fundamental level, our bodies are composed almost entirely of empty space. Beneath the surface of our bones, tissues, and cells, we find that our physical form is constructed from atoms, which themselves are predominantly made up of empty space, held together by the invisible forces of electromagnetism. The idea that we are, in essence, built from empty space can feel unsettling, yet it is central to our understanding of quantum mechanics.

Atom Loss: A Bottleneck in Quantum Computing

Until recently, quantum computers faced a significant obstacle known as ‘atom loss’, which limited their advancement and their ability to operate for long durations. At the heart of these systems are quantum bits, or qubits, which represent information in a quantum state, allowing them to be in the state 0, 1, or both simultaneously, thanks to superposition. Qubits are formed from subatomic particles and engineered through precise manipulation and measurement of quantum mechanical properties.

Bringing Ideas to Life: My Journey as a Product Architect

Lately, I have been reflecting on what drew me, as a designer, to write about topics such as artificial intelligence and quantum computing. I have been fascinated by both topics and by how they have transformed the way we view the world. Everything we see today in terms of advancements in AI and quantum computing started with an idea, brought to life through innovation and perseverance.

Quantum Computing: Revolutionizing Industry and Science

Quantum computing harnesses quantum mechanics principles like superposition and entanglement to revolutionize fields such as pharmaceuticals and logistics. By enabling rapid computations and improved learning, it holds promise for breakthroughs, including artificial general intelligence. Ongoing investments aim to develop reliable quantum systems with the potential to transform industries significantly.

The Principles of Quantum Computing Explained

Quantum computing has transitioned from theory to reality, with various companies developing mainstream hardware. It utilizes qubits, enabling superposition, entanglement, and interference to solve complex problems differently than classical computers. Advances in superconducting processors drive the technology forward, while researchers address challenges in scaling and error correction for practical applications.

Applying the EPIS Framework to Dashboard Design and Implementation

The EPIS framework outlines a four-phase approach—Exploration, Preparation, Implementation, and Sustainment—designed to create user-centered dashboards. This methodology emphasizes understanding user needs, ensuring data clarity, and promoting sustainability. By iteratively evaluating and refining dashboard designs, EPIS supports effective decision-making and enhances user experience across diverse applications.